Welcome to Black Sheep, a spin‑off of my serialized memoir, SMIRK. If you’re looking for SMIRK, here’s the link to the complete book. Black Sheep is where I now follow similar themes of fraud and folly in other companies, industries, and individuals.

Like the cursed undead, Builder.ai’s profile lingers on LinkedIn, its pleas to “SET THE RECORD STRAIGHT” echoing in a digital wasteland. The once $1.5 billion startup filed for bankruptcy eleven months ago, insolvent despite initial big backers like Microsoft. The bigger scandal: It billed itself as an AI-based app developer, yet allegedly outsourced work to hundreds of human engineers in India.

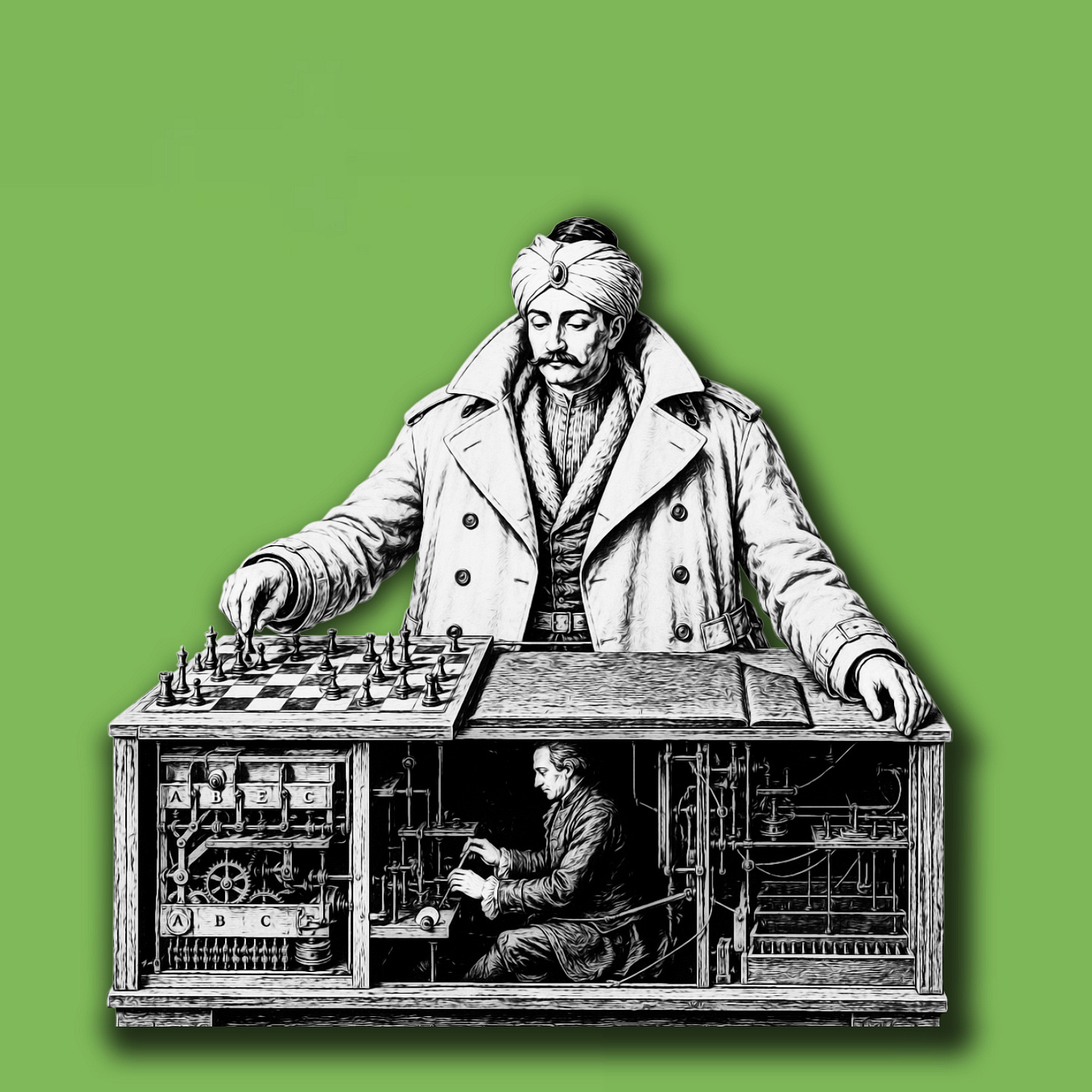

The set-up conceptually resembled a famed 18th-century hoax in Vienna known as the “Mechanical Turk,” in which a mannequin, built for whatever reason to look like a Turkish person, was said to be able to play chess entirely by automation. In reality, a grand master hidden in a compartment controlled its movements through levers and magnets. Fake gearworks of the machine were shown to impress audiences. The human who did all the thinking was not.

The Wall Street Journal initially exposed the discrepancy at Builder.ai in August 2019. At the time, the company called itself Engineer.ai, and the founder, Sachin Dev Duggal, claimed to have an AI bot called Natasha that allowed “anyone to build custom software like ordering a pizza.” But employees told the WSJ the company’s AI code-generation capabilities were more hype than reality. A former chief business officer at the company, who sued for wrongful termination and an assortment of alleged false promises, claimed in his lawsuit that the founder was telling investors the company was “80% done with developing a product that, in truth, he had barely even begun to develop.”

While an obvious reputational problem, this revelation didn’t kill the company. Engineer.ai changed its name and kept going. Meanwhile, LLMs advanced, surpassing the capabilities Duggal had pitched to investors years earlier. Builder.ai eventually did provide the AI code wizardry it had promised — using Claude, not legions of outsourced humans. But by June 2025, when the company failed, the past claims of AI con artistry were resurrected. The Business Standard in India published a short, punchy item: “Builder.ai faked AI with 700 engineers, now faces bankruptcy and probe.” This time, it hit an AI-wary public’s schadenfreude sweet spot, and the news instantly appeared all over the internet.

As with many viral headlines, that one oversimplified the situation. An analysis by the blog Pragmatic Engineer poked holes in the “700 engineers” headline by pointing out that a “Mechanical Turk” scheme would have been ridiculous in 2024 and 2025, when just about anyone could have an LLM spit out code at the touch of a few buttons. Further, as Bloomberg detailed, Builder.ai had far worse problems than being mocked for alleged fake AI output. It rapidly burned through cash, wasting millions of dollars constructing unnecessary internal versions of widely available tools like Slack. Before its collapse, creditors accused the company of vastly overstating revenue and seized a considerable portion of its assets.

Yet the company’s LinkedIn death rattles show what caused the most lasting harm: not fudging financials, not wasting cash, but allegedly passing off (however briefly) the fruits of human labor as the output of machines. During the company’s final days, someone, maybe Duggal himself, posted to its account the series of screeds that I referenced at the beginning of this post. The all-caps give an unmistakable sense that a nerve had been touched.

*****

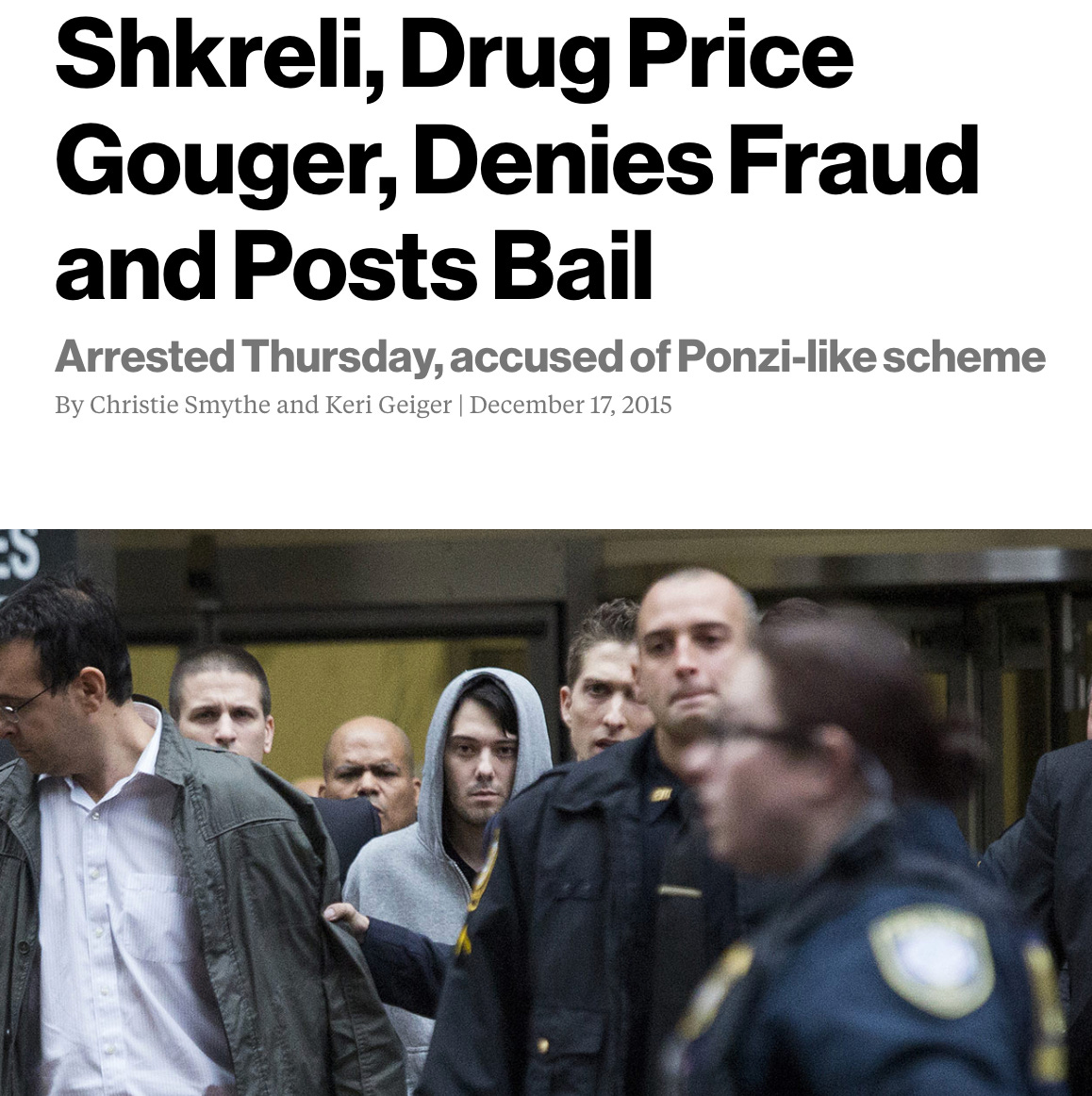

This is a familiar pattern for me. As you can read more about in SMIRK, my multifaceted relationship with “Pharma Bro” Martin Shkreli gave me an unusually detailed view of viral social media cycles. Some of the parallels are striking. Just as with Builder.ai, with Martin, it wasn’t the first “punch,” the reporting on his shocking drug price increase, that was the “knock out,” so to speak. It was the second: his dramatic early morning arrest months later on securities fraud charges.

In the public eye, the two events fused into one plot, complete with images of Martin scowling in a hoodie, paraded by FBI agents. Containing all the ingredients for propulsive outrage, the arrest story instantly swept the internet. It also developed a stubborn distortion. Most people assumed Martin was criminally charged for drug pricing, while the truth was banished to the realm of quirky factoids, the stuff of barroom trivia.

But on a deeper level, the conflation made sense. Drug pricing had evolved into a particularly searing topic by the mid-2010s. It was a concrete element of the vast and amorphous problem of excessive healthcare costs. Martin offered a cocky face onto which people could project their hatred for an entire industry. Regardless of the specific legal charges involved, the arrest had an obvious connection to price gouging in their minds: It was karma. No amount of explanation could change that.

Similarly, Builder.ai collapsed as public sentiment about AI plummeted. Virtually everyone was proclaiming that AI would widely replace jobs, even though it did not really perform most tasks as well as skilled human workers, or at least not without substantial guidance from them. But corporate executives, as usual, eagerly bought into the hype and used it as an excuse to slash headcount. People saw Builder.ai’s predicament as a sign of cosmic justice. A sampling of online comments:

Did no one at Microsoft think to ask why the AI needed chai breaks?

Raise your hand if you’re shocked. No, AI — your third hand.

*****

Now, I’m not denigrating all uses of AI. There are compelling examples of it being integrated into workflows in intelligent ways that actually do make complex tasks faster and easier. But that can take a high degree of coordination between people developing AI tools and the workers who use them, to ensure they are calibrated to appropriate functions. I think we can assume many corporations don’t approach AI rollouts with that level of care.

And that brings me to an irony I couldn’t help but see: While Builder.ai was roasted for allegedly using humans to perform what was supposed to be AI-generated work, lots of other companies today are — in a more subtle way — doing more or less the same thing. Numerous articles have highlighted a stark divide between how much work executives think AI is doing and how much employees are secretly taking on themselves. Often, AI deployments lead to more labor, not less, for remaining human staff, with many putting in extra hours to fix what has been termed “workslop.”

The net effect ends up the same as in the assumption about Builder.ai. The machine serves a performative role, while humans still toil in the background. Only they do even more work than previously, because the executives have decided to either stop hiring or lay people off. We punished Builder.ai for a story about fake AI, while embracing circumstances where AI is real, but its role in many companies is significantly exaggerated. If you think I’m overstating things, be aware that plenty of comedians on Instagram and TikTok have made this same point with far more sting.

This yet again reminded me of the Pharma Bro story. The media spotlight aimed at Martin landed squarely on a pharmaceutical industry practice that had previously escaped widespread attention: acquiring decades-old first-line treatments for deadly diseases, mostly ignored by the industry and lacking any competitors, and jacking up the price. Having seen a dramatic example, people were better positioned to understand and object to further instances of the same tactic by other companies.

With Builder.ai, the claim of its army of 700 human engineers pretending to be AI struck a similarly strong chord that people couldn’t forget, sharpening public scrutiny of an entire industry. It was an emperor’s new clothes moment with a new moral: FOMO may drive companies to chase AI, but Builder.ai’s final all-caps shrieks of desperation show that devaluing human workers also comes at a profound cost.